Can Compact Language Models Search Like Agents? Distillation-Guided Policy Optimization for Preserving Agentic RAG Capabilities

ACL 2026

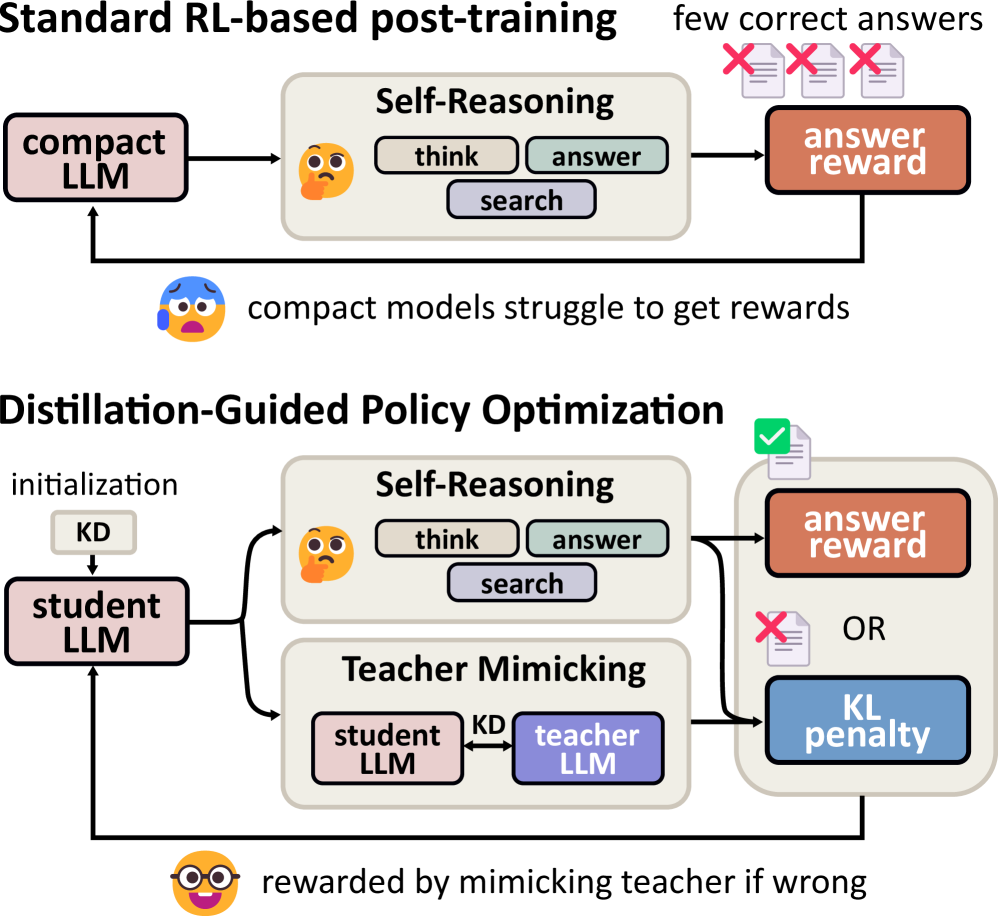

TL;DR We investigate whether compact language models (0.5–1B) can acquire sophisticated agentic retrieval-augmented generation (RAG) behavior. While existing agentic RAG systems rely on multi-billion-parameter models, compact models suffer from poor initial outputs, sparse rewards, and unstable reinforcement learning dynamics. To address these challenges, we propose Distillation-Guided Policy Optimization (DGPO), a two-phase training framework combining cold-start knowledge distillation and selective teacher-guided reinforcement learning.

Overview

This project introduces Distillation-Guided Policy Optimization (DGPO), a reinforcement learning framework designed to unlock agentic retrieval-augmented generation (RAG) capabilities in compact language models (0.5–1B parameters). DGPO stabilizes training via:

- Cold-Start Initialization with Knowledge Distillation (KD) using high-quality teacher-generated trajectories.

- Selective Teacher Guidance during RL—rewarding correct autonomous reasoning while penalizing incorrect outputs via KL divergence against the teacher.

DGPO achieves up to 55× improvement over base compact models and even surpasses its 3B-parameter teacher on several datasets.

Challenges in Compact Agentic RAG

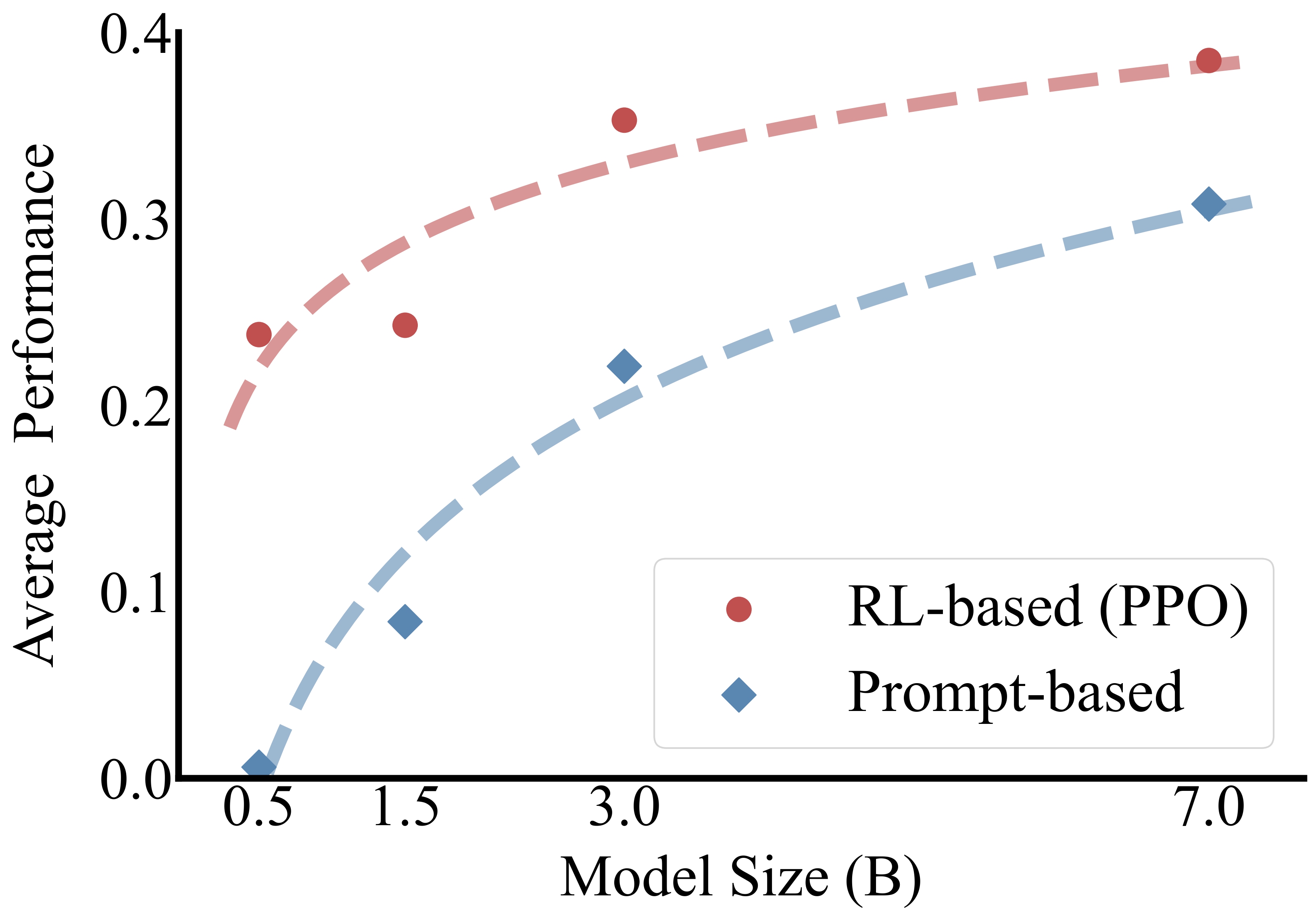

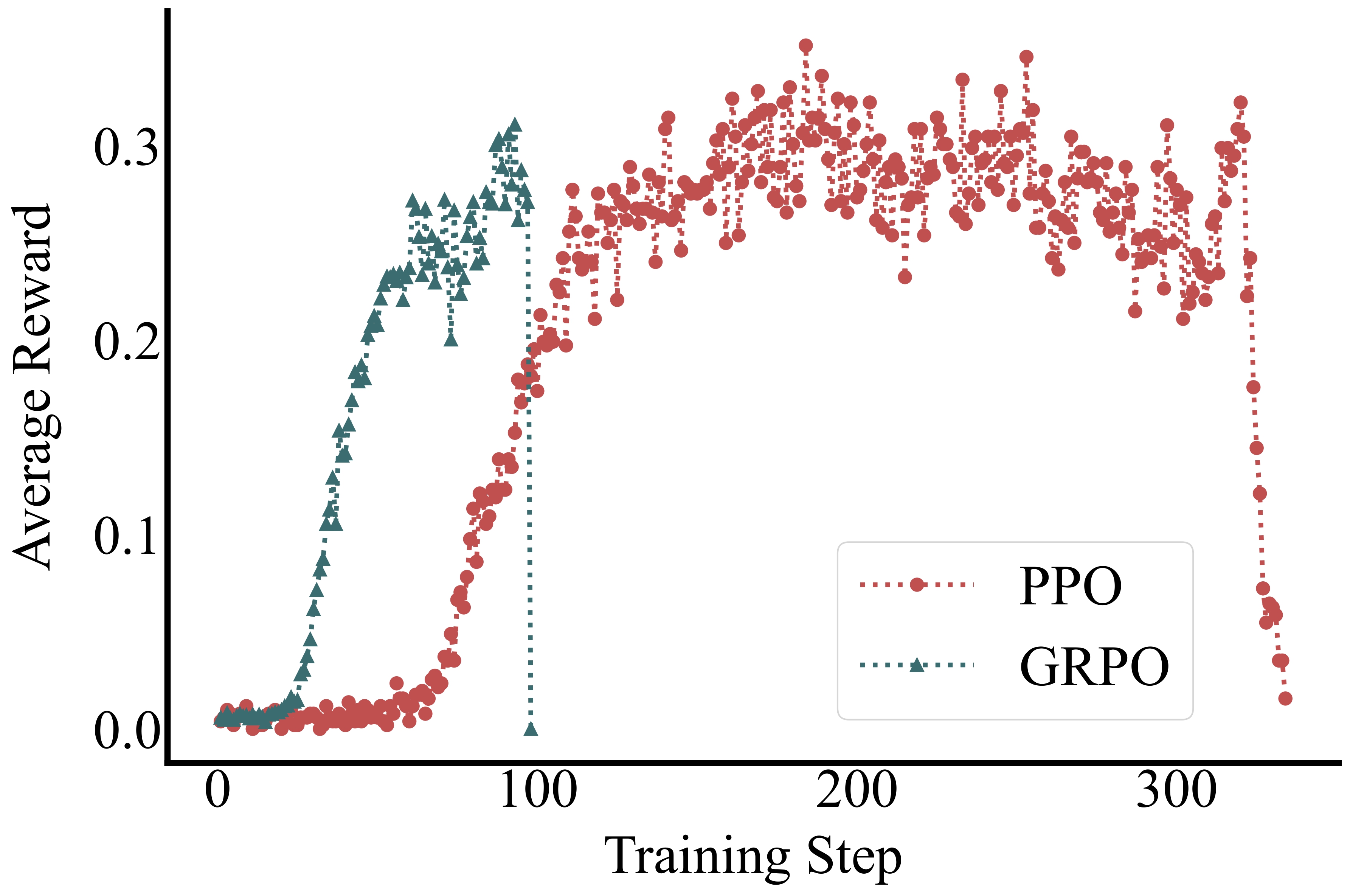

Applying Reinforcement Learning (RL) to compact models presents unique challenges. Unlike larger models, compact models (e.g., 0.5B parameters) exhibit poor initial performance, resulting in sparse rewards and unstable training dynamics.

As the figures in this section show, smaller models lag significantly behind their larger counterparts on agentic RAG tasks, and standard RL methods such as PPO and GRPO often either fail to improve performance or collapse early in training.

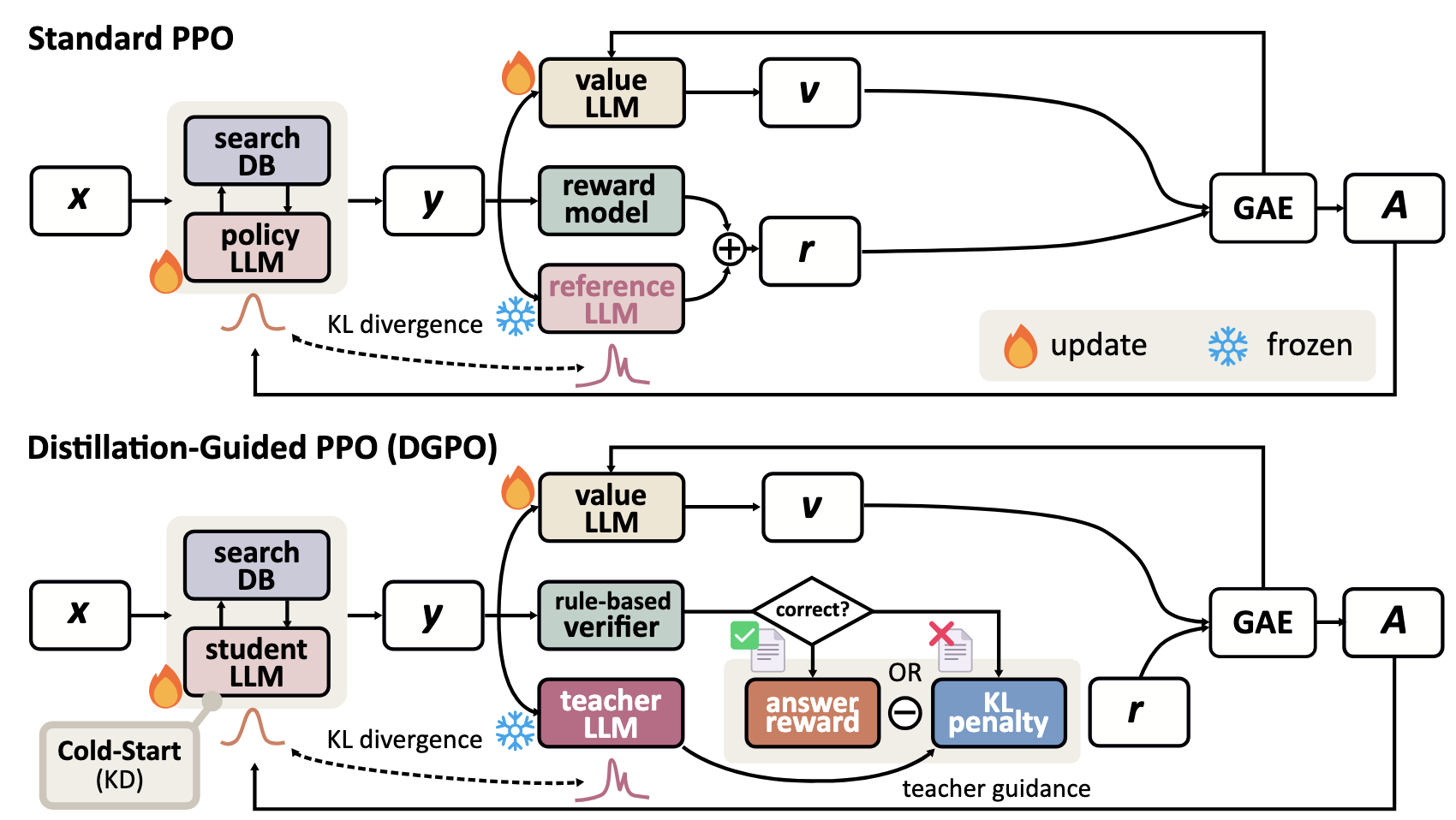

Distillation-Guided Policy Optimization (DGPO)

To overcome these challenges, we propose Distillation-Guided Policy Optimization (DGPO). Our framework operates in two key phases:

- Cold-Start KD Initialization: The student model is initialized by distilling from the teacher's correct trajectories, i.e., teacher-generated outputs (TGOs) that reach the right answer. This establishes a stable foundation for reasoning.

- Distillation-Guided RL: We employ a "mimic if wrong, reward if right" strategy.

  - Correct Answer: The student receives a reward (r=1) and updates its policy autonomously.

  - Incorrect Answer: The student is penalized via KL divergence so that it mimics the teacher's distribution, effectively using the teacher as an active guide for error correction.
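The cold-start phase depends on keeping only the teacher trajectories that end in a correct answer. The following is a minimal sketch of that filtering step, not the authors' implementation: the trajectory fields (`final_answer`, `gold_answers`) and the normalized exact-match check are assumptions made for illustration, and the distillation loss applied afterwards is not shown.

```python
def normalize(text: str) -> str:
    """Lowercase and collapse whitespace (hypothetical normalization)."""
    return " ".join(text.lower().split())


def keep_correct_trajectories(trajectories: list[dict]) -> list[dict]:
    """Keep only teacher rollouts whose final answer matches a gold answer.

    Assumption: correctness is judged by normalized exact match.
    """
    kept = []
    for traj in trajectories:
        golds = {normalize(g) for g in traj["gold_answers"]}
        if normalize(traj["final_answer"]) in golds:
            kept.append(traj)
    return kept


# Toy teacher rollouts; the field names are illustrative only.
teacher_rollouts = [
    {"question": "q1", "final_answer": "Paris", "gold_answers": ["paris"]},
    {"question": "q2", "final_answer": "Lyon", "gold_answers": ["Marseille"]},
]

# Only the correct rollout survives and would be used for distillation.
cold_start_set = keep_correct_trajectories(teacher_rollouts)
print([t["question"] for t in cold_start_set])
```

Filtering before distillation means the student only imitates trajectories that actually solved the question, which is what the cold-start step above describes.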

The core of DGPO is the selective reward-and-penalty objective used during the RL phase. It lets the student explore autonomously when its answer is correct, but forces alignment with the teacher when it fails.
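The "mimic if wrong, reward if right" rule can be sketched per rollout as follows. This is an illustrative sketch under stated assumptions rather than the paper's exact objective: the KL direction (teacher to student), the token-level averaging, the zero reward for wrong answers, and the weight `beta` are all assumptions, and the function names are hypothetical.

```python
import math


def log_softmax(logits: list[float]) -> list[float]:
    """Numerically stable log-softmax over one token's logits."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - log_z for x in logits]


def token_kl(teacher_logits: list[float], student_logits: list[float]) -> float:
    """KL(teacher || student) at a single token position."""
    t = log_softmax(teacher_logits)
    s = log_softmax(student_logits)
    return sum(math.exp(tp) * (tp - sp) for tp, sp in zip(t, s))


def selective_signal(answer_is_correct, teacher_seq, student_seq, beta=1.0):
    """Return (reward, kl_penalty) for one rollout.

    Correct answer: reward r=1 and no teacher penalty.
    Incorrect answer: no reward (assumed) and a KL penalty toward the teacher.
    """
    if answer_is_correct:
        return 1.0, 0.0
    kls = [token_kl(t, s) for t, s in zip(teacher_seq, student_seq)]
    return 0.0, beta * sum(kls) / len(kls)


# Toy logits over a 3-token vocabulary for a 2-token rollout.
teacher_seq = [[2.0, 0.0, 0.0], [0.0, 3.0, 0.0]]
student_seq = [[0.0, 0.0, 2.0], [0.0, 1.0, 0.0]]

reward_ok, penalty_ok = selective_signal(True, teacher_seq, student_seq)
reward_bad, penalty_bad = selective_signal(False, teacher_seq, student_seq)
print(reward_ok, penalty_ok, reward_bad, penalty_bad > 0)
```

Gating the KL term on correctness keeps the teacher out of the way on rollouts the student already solves, which is one plausible reading of how the student can end up surpassing the teacher on some datasets.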

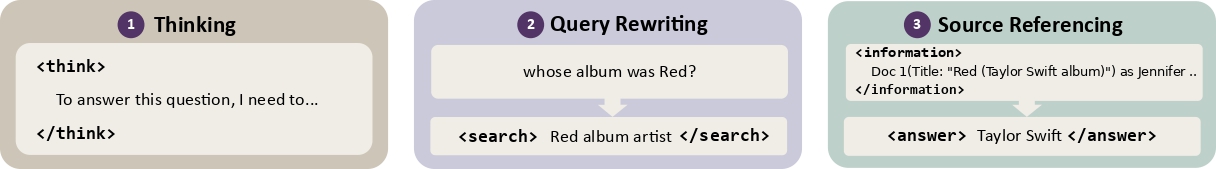

Agentic RAG Capabilities (ARCap) Evaluation

We introduce Agentic RAG Capabilities (ARCap), a fine-grained metric to diagnose how models perform agentic search, rather than just checking final answer accuracy. ARCap evaluates three dimensions:

- Thinking: The ability to plan search steps and synthesize evidence.

- Query Rewriting: The ability to reformulate user questions into effective search queries.

- Source Referencing: The ability to accurately cite and integrate retrieved documents.

Experimental Results

Overall Performance

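We evaluated DGPO on seven benchmarks. DGPO achieves the highest average score among the trained student models (0.329) and outperforms both the RL baseline (PPO) and the distillation baselines on six of the seven benchmarks; the exception is Bamboogle, where KD scores higher (0.290 vs. 0.274). Remarkably, the 0.5B student trained with DGPO surpasses the 3B teacher on NQ, PopQA, and HotpotQA, and narrows the gap on the remaining datasets.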

| Methods | NQ | TriviaQA | PopQA | HotpotQA | 2wiki | MuSiQue | Bamboogle | Avg. |
|---|---|---|---|---|---|---|---|---|
| 🐣 Student-0.5B | 0.004 | 0.006 | 0.007 | 0.007 | 0.015 | 0.000 | 0.000 | 0.006 |
| 🎓 Teacher-3B | 0.365 | 0.569 | 0.393 | 0.340 | 0.368 | 0.135 | 0.298 | 0.353 |
| PPO | 0.306 | 0.444 | 0.379 | 0.205 | 0.218 | 0.041 | 0.073 | 0.238 |
| GKD | 0.266 | 0.408 | 0.358 | 0.216 | 0.217 | 0.055 | 0.161 | 0.240 |
| SeqKD | 0.331 | 0.416 | 0.364 | 0.283 | 0.273 | 0.089 | 0.169 | 0.275 |
| KD | 0.331 | 0.431 | 0.373 | 0.286 | 0.284 | 0.091 | 0.290 | 0.298 |
| DistiLLM | 0.333 | 0.442 | 0.373 | 0.288 | 0.270 | 0.095 | 0.209 | 0.287 |
| TAID | 0.325 | 0.427 | 0.365 | 0.290 | 0.270 | 0.079 | 0.218 | 0.282 |
| DGPO (ours) | 0.378 🏅 | 0.481 | 0.402 🏅 | 0.342 🏅 | 0.303 | 0.120 | 0.274 | 0.329 |
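As a quick sanity check on the headline numbers, the short script below recomputes the per-model averages from the table, the improvement ratio over the base student, and the datasets on which DGPO exceeds the teacher. The 55× figure appears to correspond to the ratio of the reported (rounded) averages; that reading is an inference from the table, not something the page states.

```python
DATASETS = ["NQ", "TriviaQA", "PopQA", "HotpotQA", "2wiki", "MuSiQue", "Bamboogle"]

# Scores copied from the table above.
student = [0.004, 0.006, 0.007, 0.007, 0.015, 0.000, 0.000]
teacher = [0.365, 0.569, 0.393, 0.340, 0.368, 0.135, 0.298]
dgpo = [0.378, 0.481, 0.402, 0.342, 0.303, 0.120, 0.274]


def mean(values: list[float]) -> float:
    return sum(values) / len(values)


# Recompute the "Avg." column, rounded as in the table.
avg_student = round(mean(student), 3)
avg_teacher = round(mean(teacher), 3)
avg_dgpo = round(mean(dgpo), 3)

# Ratio of the rounded averages (assumed basis of the 55x claim).
improvement = avg_dgpo / avg_student

# Datasets where the 0.5B DGPO student beats the 3B teacher.
beats_teacher = [d for d, s, t in zip(DATASETS, dgpo, teacher) if s > t]

print(avg_student, avg_teacher, avg_dgpo)
print(f"improvement over base student: about {improvement:.1f}x")
print("DGPO > teacher on:", beats_teacher)
```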

Agentic RAG Capabilities (ARCap) Analysis

We utilized the ARCap framework to diagnose how DGPO improves agentic behaviors compared to the teacher and baselines.

- (a) Source Referencing: DGPO provides strong information extraction when the correct evidence is directly available.

- (b) Query Rewriting: DGPO achieves teacher-level query rewriting.

- (c) Thinking: DGPO exhibits the strongest multi-hop reasoning among the trained compact models, taking more search steps than the teacher model on MuSiQue (2.64 vs. 1.60).

| Models | NQ (Single-hop), w/o think | NQ (Single-hop), w/ think | MuSiQue (Multi-hop), w/o think | MuSiQue (Multi-hop), w/ think |
|---|---|---|---|---|
| Student-0.5B | 0.386 | 0.034 | 0.166 | 0.013 |
| Teacher-3B | 0.589 | 0.560 | 0.413 | 0.357 |
| PPO | 0.547 | 0.581 | 0.258 | 0.242 |
| KD | 0.540 | 0.544 | 0.321 | 0.256 |
| DGPO (ours) | 0.565 | 0.593 | 0.312 | 0.287 |

| Models | NQ (1-hop), Hit Ratio | MuSiQue (Multi-hop), Hit Ratio | MuSiQue (Multi-hop), Search Steps |
|---|---|---|---|
| Student-0.5B | 0.004 | 0.052 | 3.86 |
| Teacher-3B | 0.682 | 0.668 | 1.60 |
| PPO | 0.711 | 0.568 | 1.68 |
| KD | 0.675 | 0.570 | 2.45 |
| DGPO (ours) | 0.682 | 0.583 | 2.64 |
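The page does not define how Hit Ratio and Search Steps are computed, so the sketch below is only one plausible reading: a question counts as a hit if any document retrieved at any step contains the gold answer string, and search steps are the number of retrieval calls per question. Both definitions and the episode field names are assumptions introduced for illustration.

```python
def hit_ratio(episodes: list[dict]) -> float:
    """Fraction of episodes where some retrieved document contains the gold answer.

    Assumed definition: case-insensitive substring match over all search steps.
    """
    hits = 0
    for ep in episodes:
        gold = ep["gold_answer"].lower()
        docs = [doc for step in ep["retrieved_per_step"] for doc in step]
        if any(gold in doc.lower() for doc in docs):
            hits += 1
    return hits / len(episodes)


def mean_search_steps(episodes: list[dict]) -> float:
    """Average number of retrieval calls per episode (assumed definition)."""
    return sum(len(ep["retrieved_per_step"]) for ep in episodes) / len(episodes)


# Toy episodes: each inner list holds the documents returned by one search call.
episodes = [
    {
        "gold_answer": "Paris",
        "retrieved_per_step": [["Lyon is a city."], ["Paris is the capital."]],
    },
    {
        "gold_answer": "Tokyo",
        "retrieved_per_step": [["Osaka is in Japan."]],
    },
]

print(hit_ratio(episodes), mean_search_steps(episodes))
```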

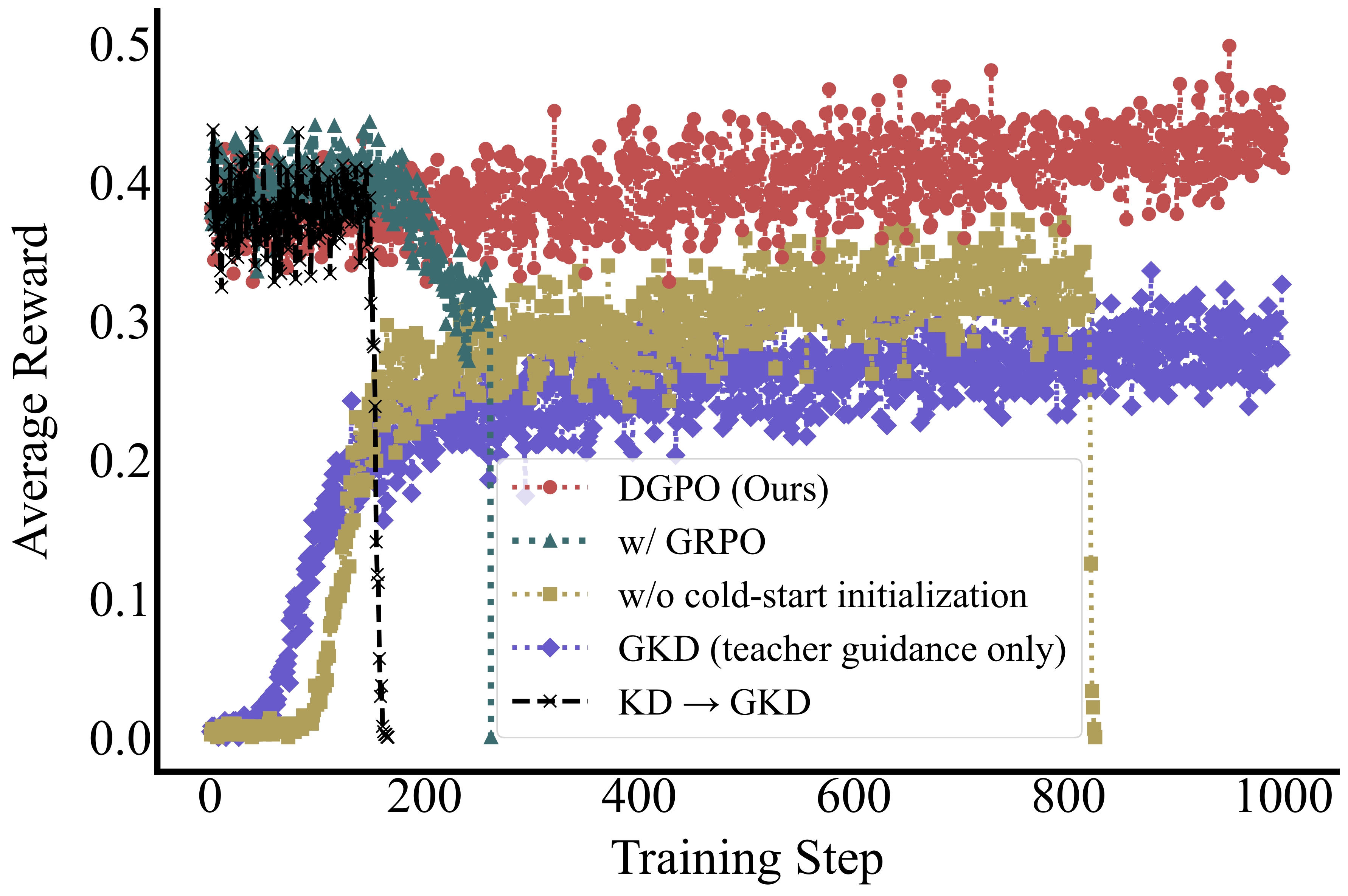

Training Stability

DGPO maintains stable learning curves well beyond where other methods collapse. As shown below, while GRPO and standard PPO struggle with the 0.5B model, DGPO sustains performance gains up to 1000 training steps.

Acknowledgement

This work was supported by JST AIP Acceleration Research, Japan, Grant Number JPMJCR23U2 and JST PRESTO, Japan, Grant Number JPMJPR2518.

Citation

@inproceedings{kotoge2026dgpo,
  title = "Can Compact Language Models Search Like Agents? Distillation-Guided Policy Optimization for Preserving Agentic RAG Capabilities",
  author = "Kotoge, Rikuto and Nishimura, Mai and Ma, Jiaxin",
  booktitle = "Proceedings of the 64th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers)",
  month = jul,
  year = "2026",
  publisher = "Association for Computational Linguistics",
}

@inproceedings{kotoge2025democratizing,
  title = "Democratizing Agentic {RAG}: Distillation-Guided Policy Optimization for Compact Language Models",
  author = "Kotoge, Rikuto and Nishimura, Mai and Ma, Jiaxin",
  booktitle = "NeurIPS 2025 Workshop on Bridging Language, Agent, and World Models for Reasoning and Planning",
  year = "2025",
  url = "https://openreview.net/forum?id=CP0H9NAWES",
}